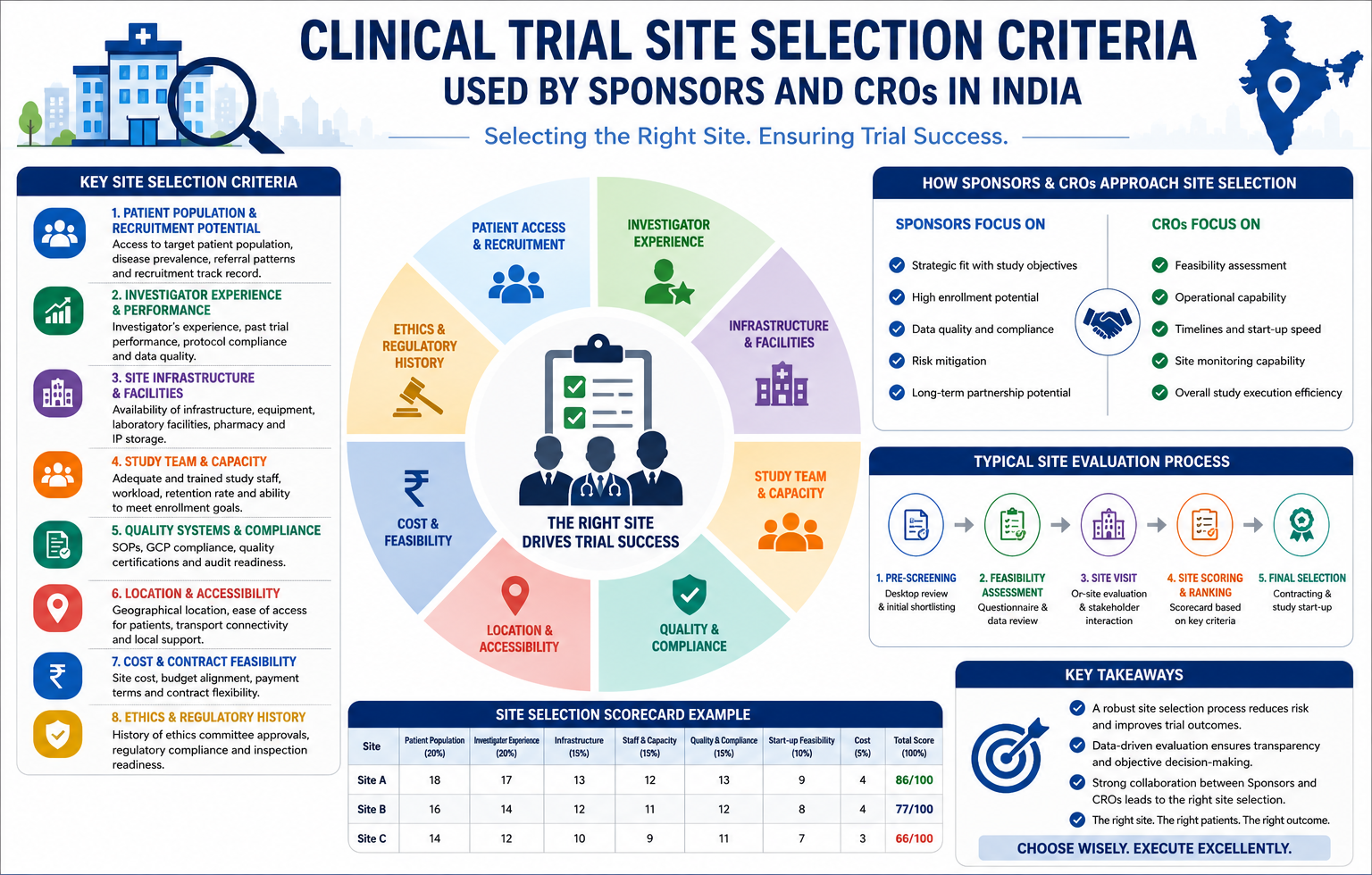

When selecting trial sites, sponsors and CROs typically rely on historical enrollment data, investigator reputation, or KOL status. These factors matter, but they are often poor predictors of actual study performance. In my 15+ years managing global trials across pharma and biotech, high-enrolling sites have sometimes derailed timelines through protocol deviations, inconsistent monitoring compliance, and poor data quality. Mid-tier sites, by contrast, have often shown exceptional operational stability: they consistently meet enrollment targets, minimize protocol deviations, and pass audits with minimal findings. Sponsors should therefore evaluate both quantitative metrics and operational quality indicators when selecting sites.

The difference lies in how performance is defined. A high-performing site isn’t one that enrolls fastest. It’s one that delivers predictable execution: consistent data capture, reliable source documentation, compliance with Good Clinical Practice (GCP), and low screen-fail ratios. These attributes directly impact cost and timeline predictability.

These are not incremental efficiencies—they compound across multi-site trials and translate into 12–18% faster study execution and 10–15% cost savings in start-up and monitoring spend.

This article breaks down the operational metrics that separate high-performing sites from the rest, based on real-world execution across 40+ trials in India and globally. You’ll find comparative data, actionable checklists, and hard lessons from trials where site selection made or broke delivery.

Defining a High-Performing Site: Beyond Enrollment Numbers

Enrollment velocity is the most cited metric in site evaluation, yet it is the least reliable as a standalone KPI. Sites that screen patients rapidly may also have high screen-fail ratios due to overly aggressive recruitment tactics or inadequate pre-screening. Other sites meet enrollment targets but still generate protocol deviations, which can cascade into data queries, monitoring delays, or even site-specific holds.

True site performance is multi-dimensional. It spans four operational pillars:

- Enrollment Efficiency – Speed and quality of subject recruitment.

- Data Quality and Timeliness – Accuracy, completeness, and source-to-CRF consistency.

- Protocol Compliance – Adherence to visit windows, SOPs, and GCP requirements.

- Operational Resilience – Team stability, monitoring responsiveness, audit readiness.

Below is a comparison of site performance across these dimensions using anonymized data from Phase 2–3 oncology, metabolic, and rare disease trials in India (n = 68 sites, 2018–2023).

Key Operational Metrics That Matter to Sponsors

Let’s break down each performance dimension with real-world operational insights.

1. Enrollment Efficiency: It’s Not Just Volume

High volume ≠ high efficiency. A site screening 50 patients/month sounds impressive—until you learn that 35 fail eligibility. That’s 35 patients consuming lab resources, investigator time, and CRA oversight, only to yield 15 randomized subjects.

Efficiency is measured by:

- Screen-to-Randomize Ratio (S:R) – Should be ≤ 1.6 for most therapeutic areas. In rare diseases, ≤ 2.0 is acceptable.

- Screen-Fail Root Cause Analysis – Sites that track why patients fail (e.g., lab values vs. comorbidities) can adjust screening SOPs proactively.

- Recruitment Channel Yield – Referral networks, digital campaigns, or hospital databases—top sites track cost-per-qualified lead by channel.
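The screen-to-randomize check can be written as a small calculation. This is a minimal Python sketch, assuming S:R is defined as patients screened divided by patients randomized. The function names and the `rare_disease` flag are hypothetical; the thresholds are the ones quoted in the list above.

```python
def screen_to_randomize_ratio(screened: int, randomized: int) -> float:
    """Patients screened per patient randomized (assumed definition of S:R)."""
    if randomized <= 0:
        raise ValueError("randomized must be positive")
    return screened / randomized


def exceeds_sr_threshold(screened: int, randomized: int, rare_disease: bool = False) -> bool:
    """True when S:R is above the quoted threshold (1.6, or 2.0 for rare diseases)."""
    threshold = 2.0 if rare_disease else 1.6
    return screen_to_randomize_ratio(screened, randomized) > threshold


# The example site from this section: 50 screened per month, 15 randomized.
ratio = screen_to_randomize_ratio(50, 15)
print(f"S:R = {ratio:.2f}, above threshold: {exceeds_sr_threshold(50, 15)}")
```

For the site that screens 50 patients to randomize 15, this prints an S:R of 3.33, roughly double the 1.6 ceiling.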

2. Data Quality: The Hidden Time Sink

Poor data quality delays database locks, increases monitoring costs, and raises query volumes. Sponsors often treat data issues as CRA responsibilities, but the root causes are typically site-level:

- Inconsistent source documentation – Entries missing date/time, initials, or lab units.

- Delayed eCRF entry – Data entered >72 hours post-visit increases recall errors.

- Unresolved queries – Sites not assigning a dedicated clinical research coordinator (CRC) to query resolution.
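The 72-hour entry window is easy to monitor if visit and eCRF entry timestamps are available. Below is a minimal Python sketch under that assumption; the record layout (`visit_id`, `visit_time`, `entry_time`) and the sample data are hypothetical and not taken from any particular EDC system.

```python
from datetime import datetime

MAX_ENTRY_LAG_HOURS = 72  # threshold quoted in the list above


def entry_lag_hours(visit_time: datetime, entry_time: datetime) -> float:
    """Hours elapsed between the subject visit and eCRF data entry."""
    return (entry_time - visit_time).total_seconds() / 3600


def late_entries(records: list[dict]) -> list[str]:
    """Visit IDs whose data was entered more than 72 hours after the visit."""
    return [
        r["visit_id"]
        for r in records
        if entry_lag_hours(r["visit_time"], r["entry_time"]) > MAX_ENTRY_LAG_HOURS
    ]


# Hypothetical sample: V1 entered after 23 hours, V2 after 98 hours.
records = [
    {"visit_id": "V1", "visit_time": datetime(2023, 3, 1, 10, 0),
     "entry_time": datetime(2023, 3, 2, 9, 0)},
    {"visit_id": "V2", "visit_time": datetime(2023, 3, 1, 10, 0),
     "entry_time": datetime(2023, 3, 5, 12, 0)},
]
print(late_entries(records))  # ['V2']
```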

Top sites implement:

- Daily eCRF reconciliation post-visit.

- Source document templates aligned with CRF fields.

- Weekly internal audits before monitoring visits.

3. Protocol Compliance: The Audit Risk Multiplier

Protocol deviations aren’t just data quality issues—they’re regulatory exposure. A single major deviation can trigger a site-specific clinical hold. CDSCO and FDA inspections focus heavily on deviation trends and corrective actions.

High-performing sites:

- Conduct pre-visit planning—reviewing eligibility, labs, and visit tasks 24–48 hours in advance.

- Use visit checklists aligned with the protocol schedule.

- Hold weekly team huddles to review deviations and update SOPs.

4. Operational Resilience: The Unseen Enabler

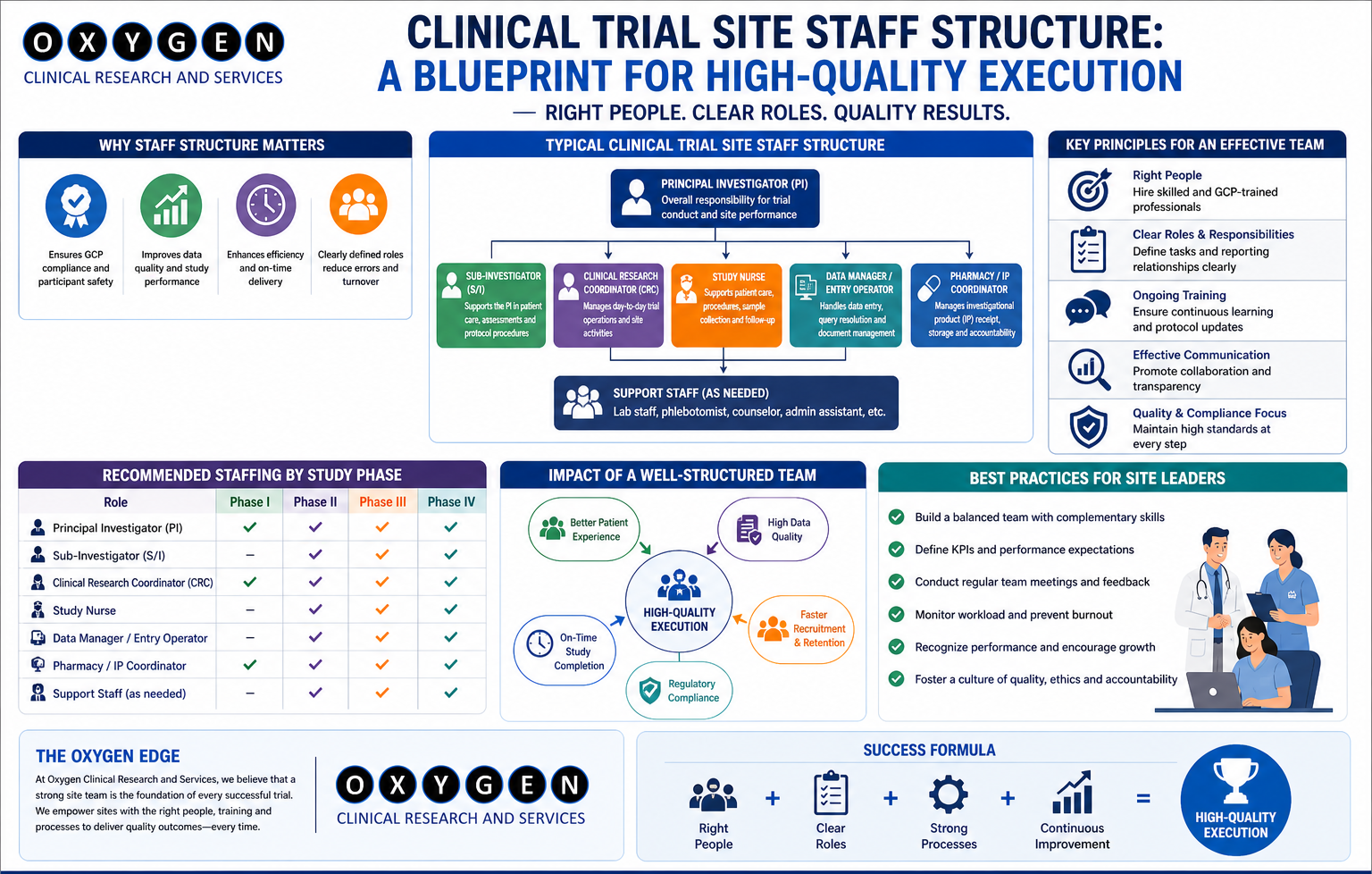

A site may have strong metrics today but still collapse under pressure, because the underlying risks are not always visible in surface-level performance data. Common causes include:

- Staff turnover (CRCs, PIs).

- Competing trials draining resources.

- Poor communication with CROs.

Resilience is measured by:

- Team stability – CRC tenure >12 months, PI continuity.

- Monitoring visit readiness – Files prepared, queries resolved, deviations documented.

- Audit preparedness – Sites that conduct mock audits pre-inspection.

Practical Checklist: Evaluating Site Resilience During Feasibility

Challenges in Identifying High-Performing Sites – And How to Mitigate Them

Even with clear metrics, sponsors face operational roadblocks. Below are common challenges and mitigation strategies—no sugar coating.

Challenge 1: Feasibility Data is Often Inflated

Sites routinely overestimate capacity during feasibility. A site claiming “10 patients/month” may base this on historical averages across unrelated indications.

Mitigation:

- Require the site to provide its past 12 months of enrollment data for the same or a similar indication.

- Cross-check with CTRI (Clinical Trials Registry – India) for active trial load.

- Conduct feasibility verification calls with the CRC, not just the PI.

Challenge 2: Local SMOs Lack Oversight Rigor

Many SMOs in India provide site access but don’t enforce standardized processes. Site performance varies widely even within the same SMO network.

Mitigation:

- Partner with SMOs that conduct monthly site performance reviews and share data.

- Require centralized monitoring access to real-time enrollment and data quality dashboards.

- Audit SMO’s internal QA processes—do they perform source verification or only act as gatekeepers?

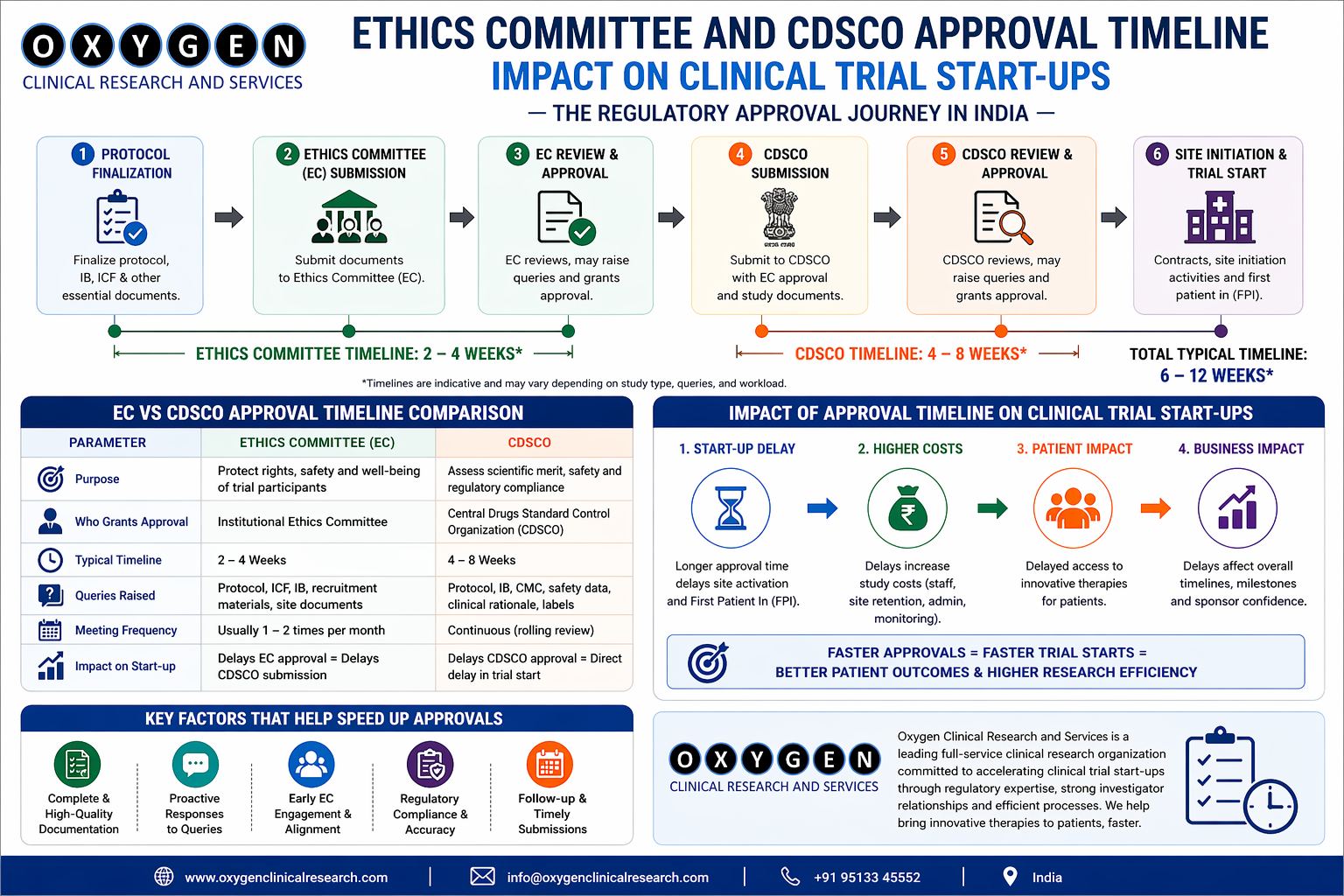

Challenge 3: Ethics Committee Delays Cascade into Timelines

EC approval in India averages 45–60 days. Some sites have ECs that meet monthly—causing 30-day delays per submission.

Mitigation:

- During feasibility, confirm EC meeting frequency and submission deadlines.

- Prioritize sites with fast-track ECs (e.g., registered with CDSCO, WHO-approved).

- Submit EC packages in parallel with site agreements; don’t wait.

Challenge 4: Patient Follow-Up Breaks in Chronic Studies

In long-term trials, such as those for diabetes and rare diseases, subject retention often declines after Year 1 as ongoing visit requirements and treatment burden wear down patient motivation. Sites without patient engagement protocols lose 30–40% of subjects.

Mitigation:

- Require sites to implement retention plans: transport support, reminder calls, family engagement.

- Track missed visit rate monthly—intervene if >8%.

- Use patient diaries with barcoded entries to verify compliance.
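The monthly missed-visit check reduces to a simple rate against the 8% trigger. The Python sketch below assumes the rate is missed visits divided by scheduled visits in the month; the function names, site labels, and counts are hypothetical.

```python
def missed_visit_rate(scheduled: int, missed: int) -> float:
    """Share of scheduled visits in the period that were missed (assumed definition)."""
    if scheduled <= 0:
        raise ValueError("scheduled must be positive")
    return missed / scheduled


def needs_intervention(scheduled: int, missed: int, threshold: float = 0.08) -> bool:
    """True when the missed-visit rate is above the 8% trigger quoted above."""
    return missed_visit_rate(scheduled, missed) > threshold


# Hypothetical monthly counts per site: (scheduled visits, missed visits).
monthly = {"Site A": (120, 6), "Site B": (90, 9)}
flagged = [site for site, (scheduled, missed) in monthly.items()
           if needs_intervention(scheduled, missed)]
print(flagged)  # ['Site B']
```

Site A misses 5% of visits and stays under the trigger; Site B misses 10% and is flagged for follow-up.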

The Role of the SMO: Why Partnership Depth Matters

Not all SMOs are equal. Many function primarily as site brokers, providing access without maintaining operational control, which leads to inconsistent execution and variable performance across sites. The best SMOs act as an extension of the sponsor’s clinical operations team, enforcing compliance, standardizing processes, and resolving issues pre-escalation.

For example, Oxygen Clinical Research and Services operates with a centralized quality management system (QMS) that audits sites monthly, standardizes recruitment SOPs, and provides real-time dashboards to sponsors. As a result, sponsor oversight burden is reduced by approximately 40% compared to managing sites independently.

Key differentiators of a high-capability SMO:

- Site tiering based on performance data – Not all sites in the network are equal. Top SMOs classify sites by therapeutic area strength.

- Centralized monitoring integration – Real-time access to site data, not just monthly reports.

- Dedicated project managers per sponsor – Not shared across multiple clients.

- Proactive risk mitigation – Flagging sites at risk of slow enrollment before milestones are missed.

Conclusion: High-Performing Sites Are Built, Not Discovered

A high-performing site isn’t a static entity. It’s the result of disciplined processes, capable personnel, and proactive oversight. The metrics discussed (enrollment efficiency, data quality, protocol compliance, and operational resilience) are not theoretical. They’re levers that directly impact study timelines.